Description

In this project, we explore an approach to generating detectors that differs from the conventional approach of learning a detector from a large corpus of annotated positive and negative samples. Instead, we assume that a large library of detectors has been evaluated off-line against a large set of detection tasks. Given a new target task, we evaluate a subset of the models on samples from the new task and use the matrix of model-task ratings to predict the performance of every model in the library on that task, which lets us select a good set of detectors for it. This approach has three key advantages of great practical interest: 1) a large collection of models can be generated in an unsupervised manner; 2) far fewer annotated samples are needed than the training sets required for training from scratch; and 3) recommending models is very fast compared to the notoriously expensive training procedures of modern detectors. Advantage (1) makes the models informative across different categories; (2) dramatically reduces the need to manually annotate vast datasets for training detectors; and (3) enables rapid generation of new detectors.
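The rating-prediction step can be illustrated with a small collaborative-filtering sketch. The page does not specify how the ratings matrix is completed, so this assumes a truncated-SVD factorization of the ratings store followed by a ridge-regression "fold-in" of the new task from its probed models. The names `predict_new_task_ratings`, `rank`, and `reg` are hypothetical and chosen only for illustration.

```python
import numpy as np


def predict_new_task_ratings(ratings, probe_idx, probe_ratings, rank=5, reg=1e-3):
    """Predict every library model's rating on a new task from a few probes.

    ratings:       (n_models, n_tasks) ratings store from hyper-training.
    probe_idx:     indices of the probe-set models evaluated on the new task.
    probe_ratings: their observed ratings on the new task's few samples.
    rank must not exceed min(n_models, n_tasks).
    """
    # Low-rank factorization of the ratings store: R ~ A @ B, where each row
    # of A is a latent description of one library model.
    u, s, _ = np.linalg.svd(ratings, full_matrices=False)
    model_factors = u[:, :rank] * s[:rank]

    # "Fold in" the new task: fit its latent vector by ridge regression using
    # only the rows (models) that were actually probed on the new task.
    a_p = model_factors[probe_idx]
    lhs = a_p.T @ a_p + reg * np.eye(rank)
    rhs = a_p.T @ np.asarray(probe_ratings, dtype=float)
    task_factor = np.linalg.solve(lhs, rhs)

    # Predicted ratings for all models; probed entries keep their measured values.
    predicted = model_factors @ task_factor
    predicted[probe_idx] = probe_ratings
    return predicted
```

On an exactly low-rank synthetic store with at least `rank` probes, the fold-in recovers the unprobed ratings; a real ratings store is only approximately low-rank, so `rank` and `reg` would need tuning.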

System Pipeline

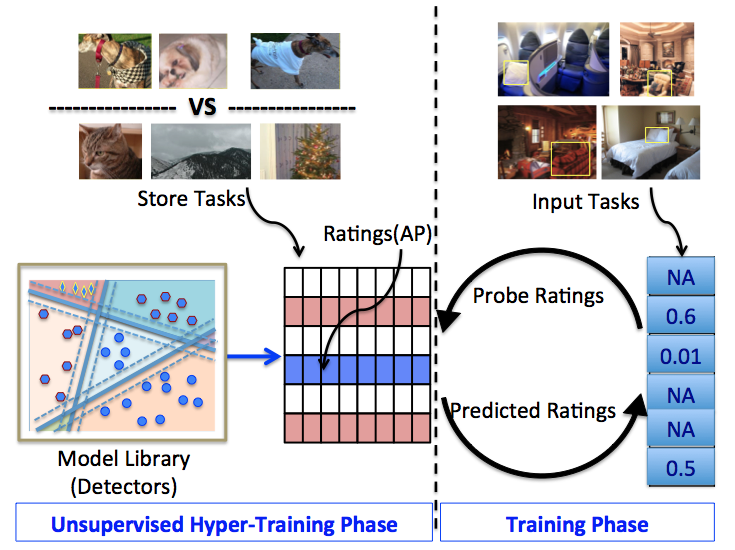

During the unsupervised hyper-training phase, a large library of object detectors that are informative across categories is generated. Their ratings on different detection tasks are recorded to form a ratings store. For a new target task or category, a small probe set of detectors is rated on the task's limited input samples, and collaborative filtering uses these ratings to recommend models from the library. A usable object detector for the new task is thus rapidly generated, either as a single recommended model or as an ensemble of recommended models.
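Once ratings are predicted, turning them into a detector can be as simple as ranking the library and combining the top models. This sketch assumes, purely for illustration, that each library model is a linear template scored on candidate-window descriptors and that an ensemble averages its members' scores; the page does not state the detector form or the combination rule, and `recommend` and `ensemble_scores` are hypothetical helpers.

```python
import numpy as np


def recommend(predicted_ratings, k):
    """Indices of the k models with the highest predicted rating, best first."""
    order = np.argsort(predicted_ratings)[::-1]
    return order[:k]


def ensemble_scores(weights, biases, chosen, features):
    """Average the detection scores of the chosen linear detectors.

    weights:  (n_models, d) linear templates, one per library model (assumed form).
    biases:   (n_models,) per-model offsets.
    chosen:   model indices, e.g. the output of recommend().
    features: (n_windows, d) descriptors of candidate windows in an image.
    """
    # Score every window with every chosen model: shape (n_windows, k).
    per_model = features @ weights[chosen].T + biases[chosen]
    # Simple unweighted average over ensemble members.
    return per_model.mean(axis=1)
```

With `k = 1` this reduces to the single recommended model; a larger `k` gives the ensemble variant.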

Representative Performance

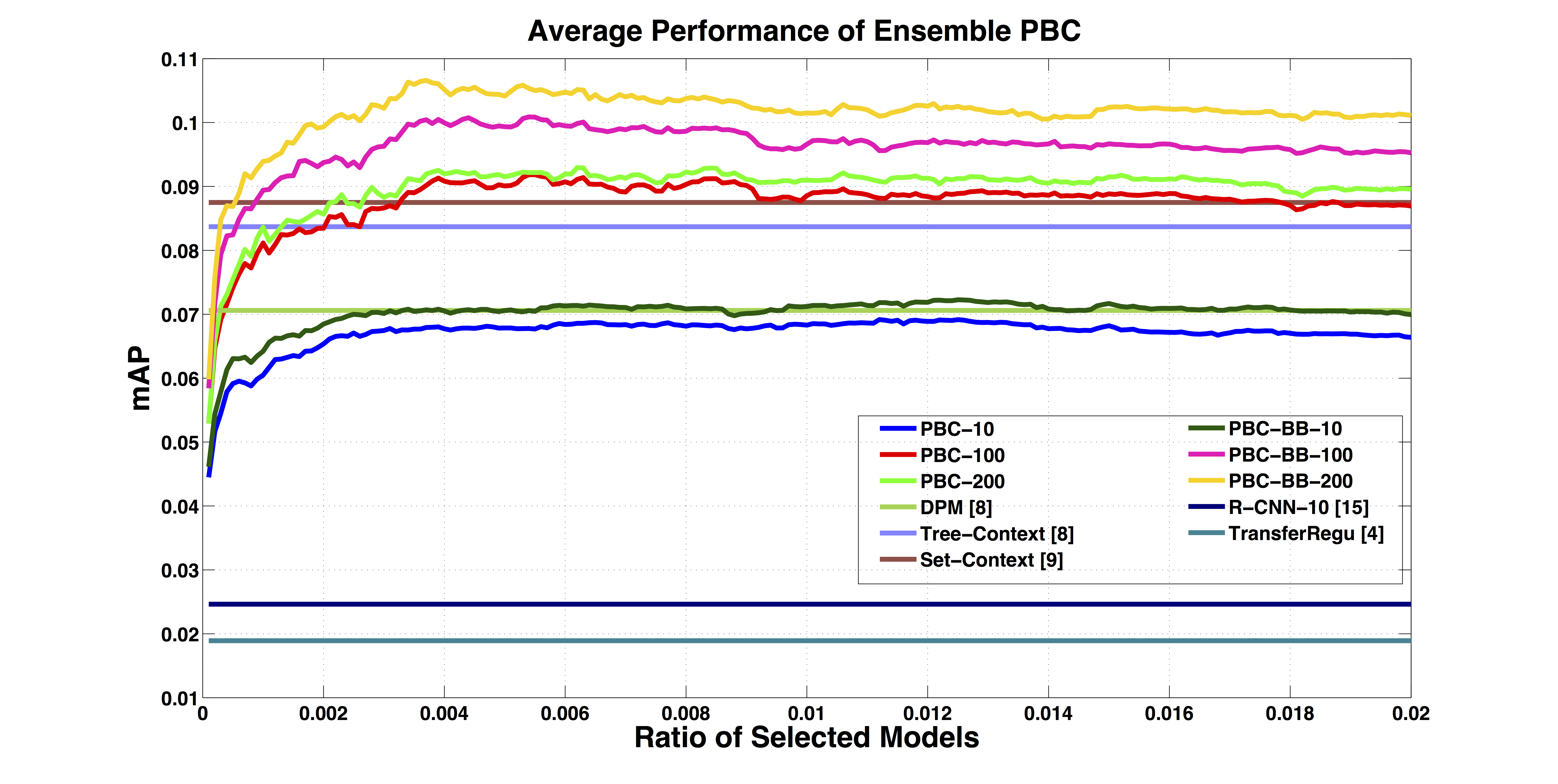

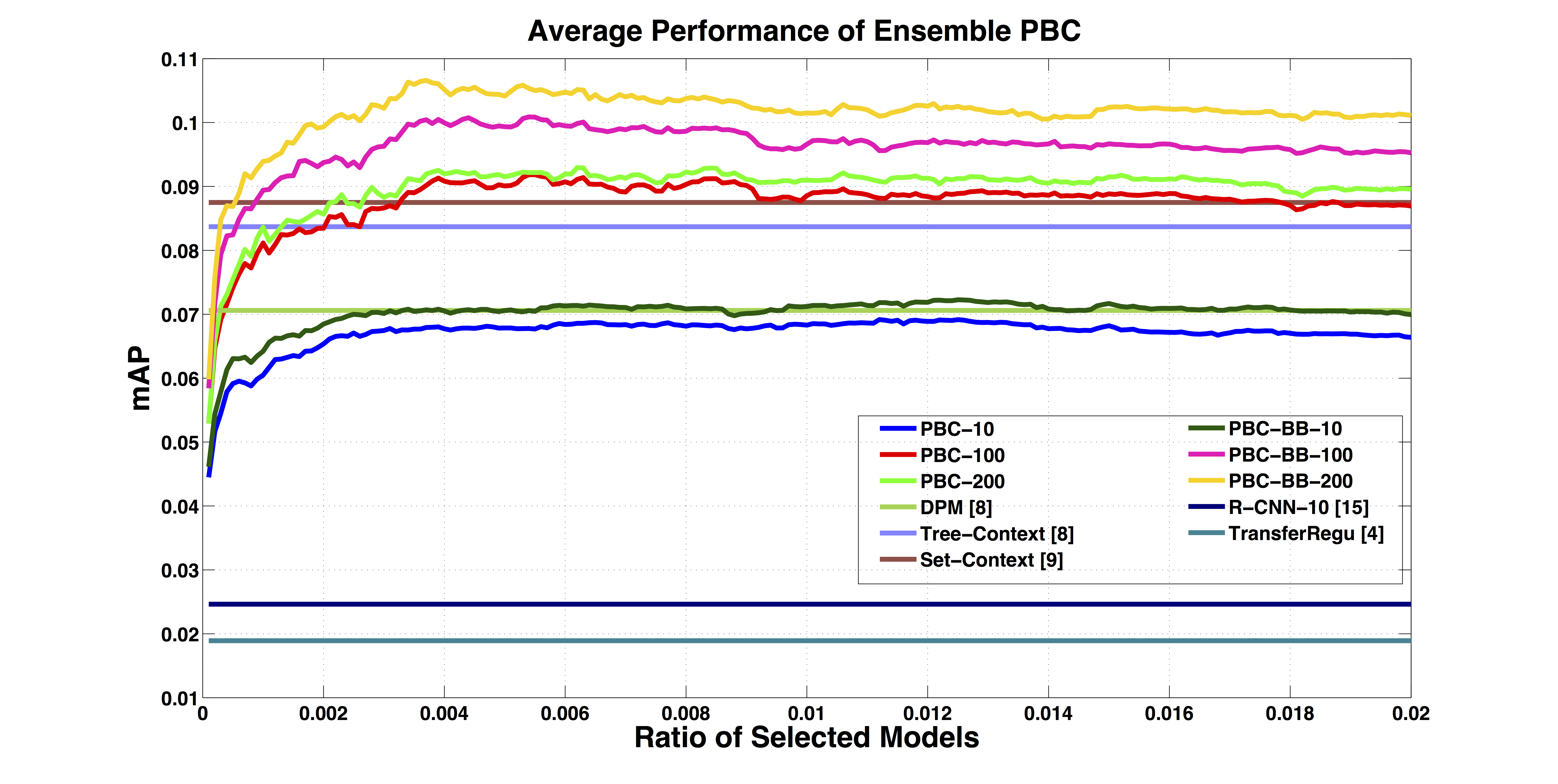

Average performance of ensemble PBC model recommendation with varied input task size over 107 categories on the SUN dataset. The ensemble of PBC models performs consistently well as the number of recommended models increases, as the six curves show. Using only a small number of images to select models that were generated by unsupervised hyper-training on an out-of-domain dataset, it achieves performance comparable to or better than several strong baselines trained with supervision on in-domain data.

Model Visualization

The same model is informative across different tasks. For five representative models (left to right), we show detections on sample images (top to bottom). Note that the models learned by unsupervised hyper-training behave like attribute detectors. For example, the first column corresponds to staircase-like objects in vertical, horizontal, inclined, and curved orientations (bottom to top). This attribute-like behavior explains why the models generalize to new input tasks.

Continuous Category Space Discovery

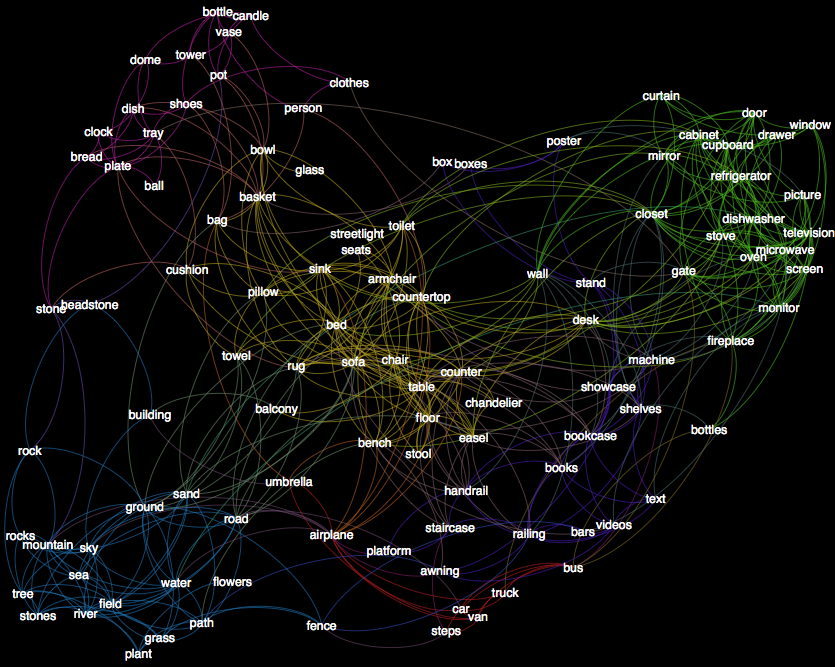

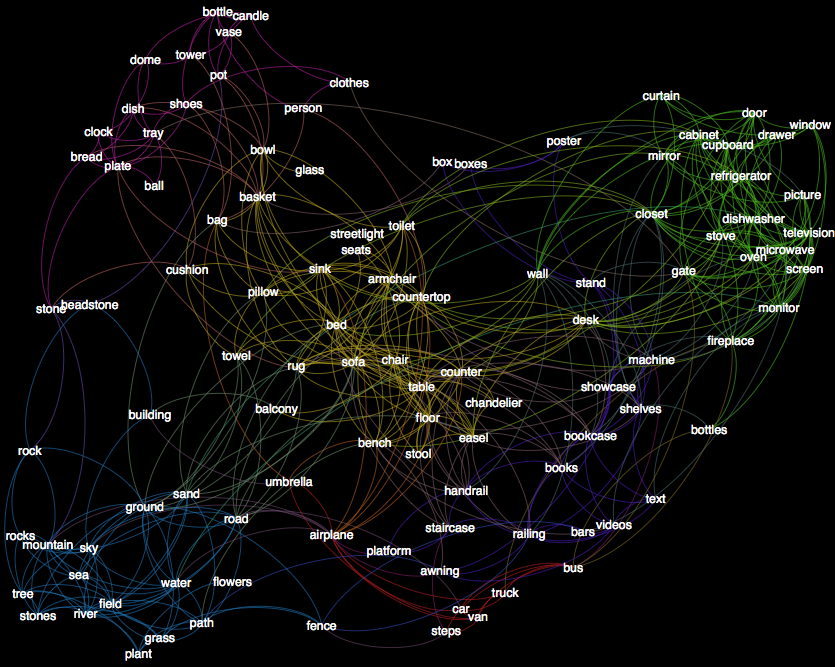

A continuous category space is discovered through the models shared across 107 categories on the SUN dataset. Although the models are generated in a totally unsupervised manner on PASCAL, it is interesting to note that visually or functionally similar categories are naturally grouped together: for example, the green cluster of television, monitor, stove, microwave, oven, and dishwasher; the blue cluster of sea, river, field, and sky; and the red cluster of car, bus, van, and truck, among others.
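One simple way to see how shared models can induce a category space is to compare categories by their rating profiles over the library. This is a hedged illustration, not the method behind the figures: it assumes each column of the ratings store describes one category and uses a centered cosine (Pearson) similarity to list each category's nearest neighbors. `category_neighbors` is a hypothetical helper.

```python
import numpy as np


def category_neighbors(ratings, names, k=3):
    """For each category, list the k categories whose rating profiles over
    the shared model library are most similar.

    ratings: (n_models, n_categories) ratings store; column j is category j.
    names:   category names, in column order.
    Assumes no column is constant (that would give a zero norm).
    """
    # Center each column and scale it to unit length, so the dot product of
    # two columns is their Pearson correlation.
    cols = ratings - ratings.mean(axis=0, keepdims=True)
    cols = cols / np.linalg.norm(cols, axis=0, keepdims=True)
    sim = cols.T @ cols
    np.fill_diagonal(sim, -np.inf)  # exclude self-matches
    neighbors = {}
    for j, name in enumerate(names):
        best = np.argsort(sim[j])[::-1][:k]
        neighbors[name] = [names[i] for i in best]
    return neighbors
```

Categories that are rated highly by the same subset of models end up as each other's neighbors, which is the intuition behind the clusters described above.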

Acknowledgements

This work was supported by an AWS in Education Coursework Grant award.